# ReasonScape

ReasonScape is a research platform for investigating how reasoning-tuned language models process information.

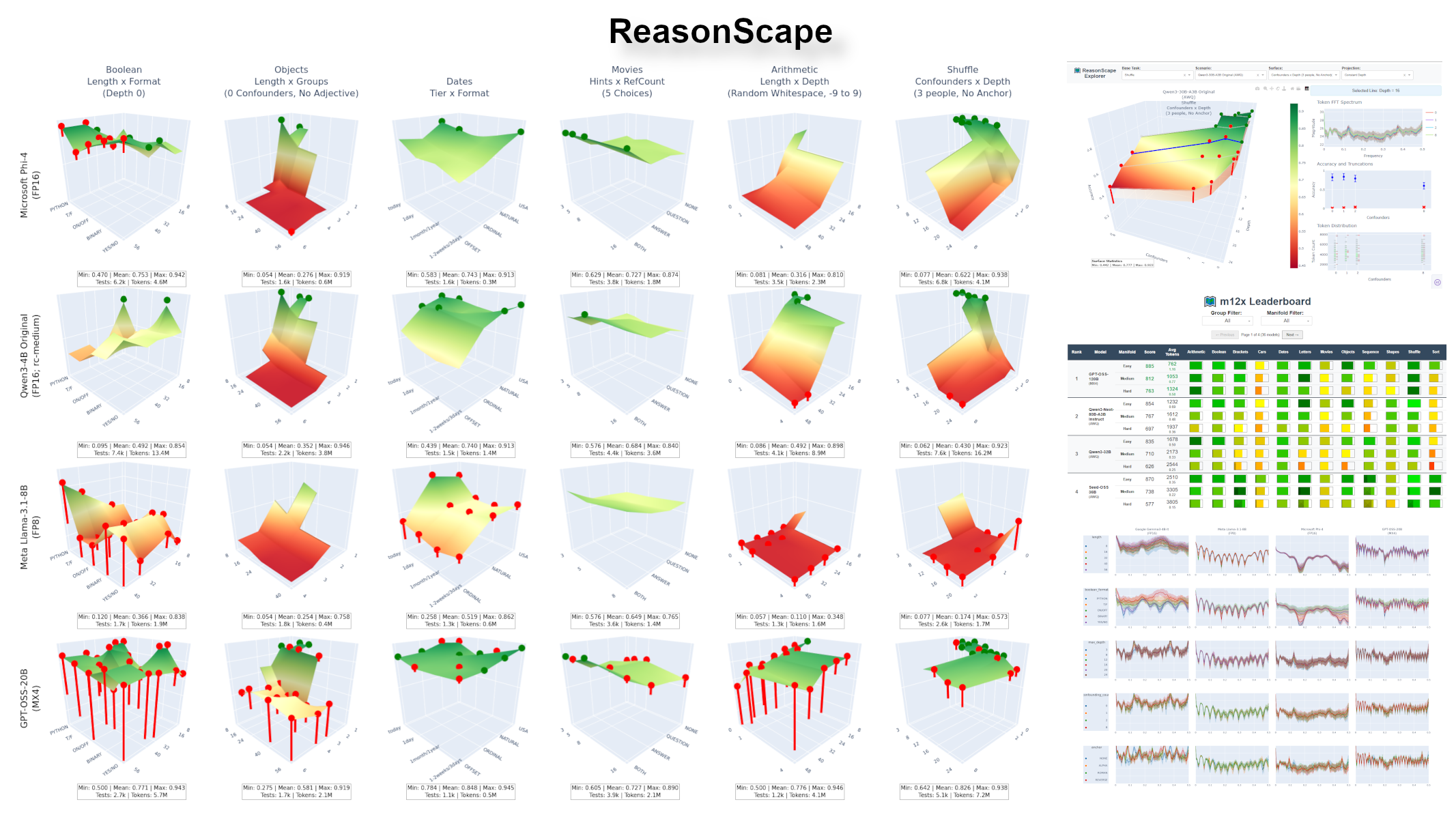

ReasonScape reveals cognitive architecture patterns that traditional benchmarks miss. 3D reasoning landscapes (left), token-frequency spectral analysis (bottom right), and interactive exploration tools (top and middle right) enable systematic comparison of information processing capabilities across models and tasks.

🌐 Homepage: https://reasonscape.com/

🛠️ GitHub: the-crypt-keeper/reasonscape

Keywords: Large language models, AI evaluation, cognitive architectures, spectral analysis, statistical methodology, parametric testing, difficulty manifolds, information processing

## Live Tools & Data

📊 Visualization Tools:

- r12 Leaderboard: https://reasonscape.com/r12/leaderboard

- r12 Explorer: https://reasonscape.com/r12/explorer (PC required)

📁 Raw Data:

- r12 Dataset: Download Guide | Direct Access (100+ models, 9B+ tokens)

## ReasonScape V3

The ReasonScape documentation is organized into six chapters:

| Chapter | Name | What It Is | Where to Learn More |
|---|---|---|---|
| 1 | Challenges | Practical problems encountered in prior LLM evaluation systems | challenges.md |
| 2 | Insight | LLMs are not simply text generators; they are information processors | insight.md |
| 3 | Methodology | Systematic solutions that emerge from applying the Insight to the Challenges | architecture.md |
| 4 | Implementation | The Python codebase that makes it real | implementation.md |
| 5 | Reference Evaluation | r12 as the current reference evaluation | r12.md |
| 6 | Research Datasets | Worked examples showing the methodology in action | datasets.md |

## Where to Start

### Understand the Vision

Challenges - The problem statement (chapter 1)

- Fundamental challenges in current LLM evaluation

- Practical barriers encountered in prior systems

Insight - The paradigm shift (chapter 2)

- LLMs as information processors

- System architecture and transformation pipeline

Architecture - The methodology (chapter 3)

- Applies the Insight to the Challenges

- Five-stage data processing pipeline

- Discovery-investigation research loop

### Use the Datasets

r12 - The current reference evaluation (chapter 5)

- 12 reasoning tasks, 16k context

- ReasonScore v2 with bootstrap confidence intervals
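As a rough illustration of what a bootstrap confidence interval is, the sketch below computes a percentile-bootstrap interval for a mean accuracy. It is not the ReasonScore v2 computation, which this page does not describe; the function name `bootstrap_ci`, its parameters, and the pass/fail data are all hypothetical.

```python
import random
import statistics


def bootstrap_ci(outcomes, n_resamples=2000, confidence=0.95, seed=0):
    """Percentile-bootstrap confidence interval for the mean of `outcomes`.

    Hypothetical helper for illustration only; not part of ReasonScape.
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    n = len(outcomes)
    means = []
    for _ in range(n_resamples):
        # Resample with replacement, same size as the original sample.
        resample = rng.choices(outcomes, k=n)
        means.append(statistics.fmean(resample))
    means.sort()
    alpha = 1.0 - confidence
    lo_idx = round((alpha / 2) * n_resamples)
    hi_idx = round((1 - alpha / 2) * n_resamples) - 1
    return statistics.fmean(outcomes), means[lo_idx], means[hi_idx]


# Made-up per-sample correctness flags: 70 passes, 30 failures.
outcomes = [1] * 70 + [0] * 30
mean, lo, hi = bootstrap_ci(outcomes)
print(f"accuracy = {mean:.2f}, 95% CI = [{lo:.2f}, {hi:.2f}]")
```

The interval narrows as the number of samples per test point grows, which is one reason to report it next to a point score when ranking models.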

Datasets - Research collections (chapter 6)

- Worked examples of complete investigations

- Templates for your own research

Workflow Guide - The Three P's

- Position: Ranking models ("Which is better?")

- Profile: Characterizing and diagnosing capabilities ("What can it do? Why does this fail?")

- Probe: Examining raw traces ("What does failure look like?")

### Dive Deeper

Implementation - The Python codebase (chapter 4)

- Stage-by-stage implementation guide

- Deep-dive design documents (manifold, reasonscore)

- Tool references and workflows

Technical Details - Low-level algorithms

- Parametric test generation

- Statistical methodology

- FFT analysis and compression
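To give a feel for spectral analysis of a token sequence, the sketch below computes a power spectrum with a naive discrete Fourier transform and locates the dominant frequency bin. This page does not say which signal ReasonScape transforms or how, so treating raw token IDs as the signal is an assumption, and `power_spectrum` and the sample data are hypothetical. A real pipeline would use an FFT library rather than this O(n²) loop.

```python
import cmath


def power_spectrum(signal):
    """Naive O(n^2) DFT power spectrum of a real sequence, mean removed.

    Hypothetical helper for illustration only; not part of ReasonScape.
    """
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # drop the DC component
    spectrum = []
    for k in range(n // 2 + 1):  # non-negative frequencies only
        coeff = sum(
            x * cmath.exp(-2j * cmath.pi * k * t / n)
            for t, x in enumerate(centered)
        )
        spectrum.append(abs(coeff) ** 2 / n)
    return spectrum


# Made-up token-ID sequence that repeats every 4 tokens, 32 tokens total.
token_ids = [10, 20, 30, 40] * 8
spectrum = power_spectrum(token_ids)

# Strongest non-DC bin; a period of 4 in 32 samples lands in bin 32 / 4 = 8.
dominant_bin = max(range(1, len(spectrum)), key=spectrum.__getitem__)
print(dominant_bin)
```

A repetitive input concentrates its energy in a few bins, while less structured input spreads it across the spectrum.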

Tools - Complete command reference

- All flags and formats for every CLI tool and webapp

- Filter syntax, output formats, probe loop classifications

Config - Experiment configuration

- Precision levels and sampling strategies

- Tasks (list, grid, manifold modes)

Templates & Samplers - Execution configuration

- Prompting strategies (templates)

- Generation parameters (samplers)

PointsDB - Data structure API

Tasks - Abstract task API and task overview

## Citation

If you use ReasonScape in your research, please cite:

    @software{reasonscape2025,
      title={ReasonScape: Information Processing Evaluation for Large Language Models},
      author={Mikhail Ravkine},
      year={2025},
      url={https://github.com/the-crypt-keeper/reasonscape}
    }

## License

MIT

## Acknowledgments

ReasonScape builds upon insights from BIG-Bench Hard, lm-evaluation-harness, and the broader AI evaluation community.